Monitoring and evaluation can be challenging for all organizations. As organizations grow and change, so must their systems for evaluation. Prior to this year, Engineers Without Borders USA (EWB-USA) had a monitoring and evaluation (M&E) system in place, but they were ready to take it to the next level. They wanted to create a more integrated system across the organization, streamline the process for volunteers, and link EWB-USA’s work more directly to the Sustainable Development Goals (SDGs).

EWB-USA recognized that to design an evaluation system that could work across diverse programs and varied project types, they would need experienced evaluators with a broad array of skills. From within the Posner Center community, they selected a collaborative team of consultants, who have been working intensively with EWB-USA staff to design a streamlined new Planning, Monitoring, Evaluation and Learning (PMEL) system. Led by Kurt Wilson from EffectX, the team also included Sophia Friedson-Ridenour (Development Praxis), Paul B Collier, and Julie Mandolini-Trummel (Kimetrica). This team brought strong skills in a variety of arenas, including outcome mapping, qualitative research, Salesforce, and evaluation in complex environments, to the challenge of evaluating EWB-USA’s contributions to key development issues.

An Overview of the New System

In mid-November, the team of evaluators presented a high-level overview to EWB-USA staff at the Posner Center. The overview synthesized the process through which the new PMEL system was developed and laid out a roadmap of the work still to come. The evaluation team started by building on the existing evaluation processes at EWB-USA, exploring the many indicators across EWB-USA’s project types, from water supply and sanitation to agriculture and energy. Then they conducted an intensive literature review using peer-reviewed journals and other globally established standards to identify key criteria and standards for each project type. Working from these demonstrated outcomes, they reverse-engineered EWB-USA’s PMEL system to capture these outcomes at a project level, more closely aligning their evaluation system with global standards and best practices.

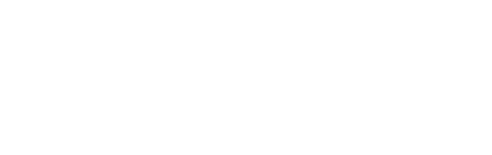

There are two central changes in the new PMEL system. First, there is a more holistic approach to evaluation across EWB-USA’s main programs. In particular, the framework captures the contributions to communities via engineering projects as well as the learning and skill development of their volunteers. This emphasis reflects EWB-USA’s dual mission and further integrates the community-facing and volunteer-facing aspects of their work.

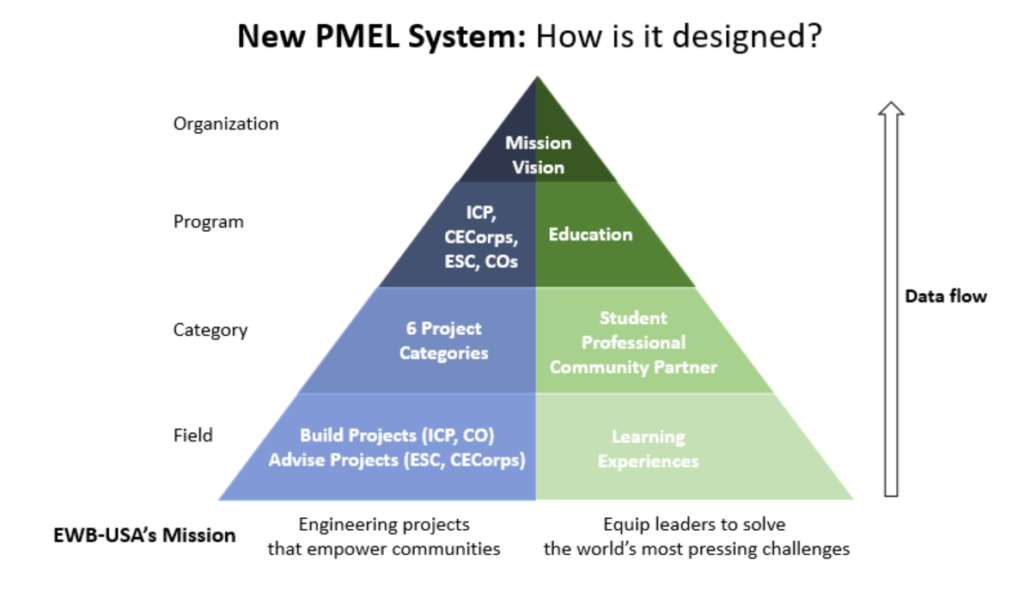

The second major change involves the detailed mapping of all EWB-USA projects and project types onto the Sustainable Development Goals. While projects focused on water, sanitation, agriculture and more could be said to touch upon nearly all of the SDGs, the evaluation team worked to establish direct relationships between each of EWB’s project types and specific SDGs. An essential new distinction they are making is that EWB’s work contributes to each of those established goals rather than causing the outcomes outright. The distinction between contribution and causation is an essential one, and one that generated some debate in the room. While causation is a more powerful term, it is much harder to establish in complex environments, and many claims of causation in the development world are not properly supported by the data. Though contribution may sound more muted, especially to those in donor-facing roles, EWB can assess and state their contribution to a set of outcomes with much greater confidence.

What’s Ahead

The new PMEL system has been built into EWB’s Salesforce and is currently in a sandbox environment, which means that users can test it without affecting EWB’s existing data. Starting in late November or early December, EWB will launch the beta testing phase. They’ll use staff and volunteer experience to refine and complete production of the system early in 2019, with full training and rollout by the end of Q1.

There is still a lot of work, learning, and iteration ahead to bring this new system to life throughout the organization and across their expansive volunteer network. Though they are at the front end of testing and using the system, a tremendous amount of design and development work has already been completed, and it promises a simpler, more integrated, and more robust approach to evaluation at Engineers Without Borders USA.